AIgument: The Art of AI Disagreement – Developing a Language Model Debate Platform

AI benchmarks are gamed. LLMs are trained to ace specific tests, not to think or argue. So instead of building another analytics dashboard, I built something to put them in a bind: a head-to-head debate platform. AIgument.

If two LLMs have an argument in a serverless forest and only the developer is there to read it, did it happen? I wanted these debates to be shareable and memorable.

I also wanted to push Next.js as far as it would go without a separate backend, try my first edge database with Neon, go deep on the Vercel AI SDK, and find out what happens when you give LLMs a lot of personality.

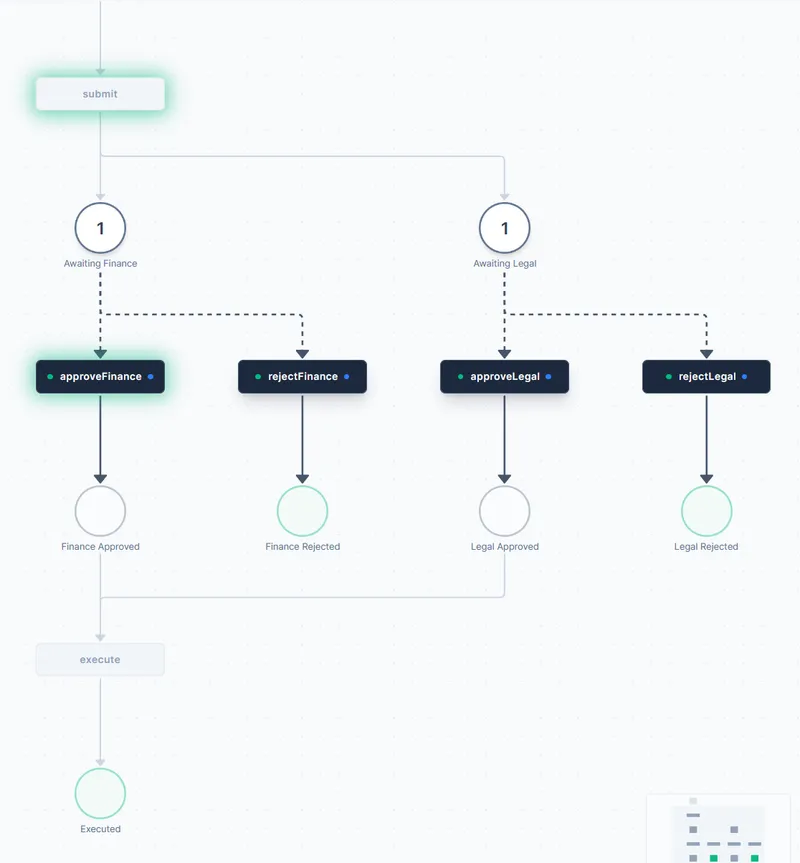

The debate engine

The flow is sequential: Debater 1 argues, Debater 2 responds, repeat. State management on the client, LLM calls on the server.
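As a rough sketch of that alternation: the function below works out whose turn is next from the number of arguments already made. The names (`Turn`, `nextTurn`, `schedule`) are made up for illustration, and it assumes PRO always opens and each round holds exactly one turn per side, which the post doesn't spell out.

```typescript
// Hypothetical types: illustrative names, not taken from the AIgument source.
type Position = "PRO" | "CON";

interface Turn {
  position: Position;
  round: number; // 1-based round number
}

// Given how many arguments have been made so far, work out whose turn is next.
// Assumption: PRO opens, and each round is one PRO turn followed by one CON turn.
function nextTurn(completedTurns: number): Turn {
  return {
    position: completedTurns % 2 === 0 ? "PRO" : "CON",
    round: Math.floor(completedTurns / 2) + 1,
  };
}

// Build the full speaking order for a debate of `rounds` rounds.
function schedule(rounds: number): Turn[] {
  return Array.from({ length: rounds * 2 }, (_, i) => nextTurn(i));
}
```

Deriving the turn from the history length means the client only has to track one thing (the list of arguments) rather than a separate "whose turn is it" flag that can drift out of sync.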

Every LLM provider implements text streaming differently. Google alone has multiple Gemini APIs, each with different requirements. The Vercel AI SDK abstracts all of that away behind a single interface. It’s maintained by Vercel, who are about as ‘open source’ as the House of Lords is ‘democratically elected’.

The streamText function in the SDK was key for those live “looks like the AI is typing” responses:

```typescript
// Snippet from useDebateStreaming.ts
// ...
const result = streamText({
  model: modelProviderInstance,
  prompt: systemPrompt, // The core prompt guiding the debate
  experimental_transform: smoothStream(), // For a smoother typing effect
  onError: (event) => {
    console.error(`[useDebateStreaming] streamText error:`, event.error);
    setError(
      new DebateError(
        event.error instanceof Error ? event.error.message : "Stream error",
        "STREAM_ERROR",
      ),
    );
  },
});

for await (const textPart of result.textStream) {
  accumulatedText += textPart;
  setStreamingText(accumulatedText); // Update UI as text streams
}

onResponseComplete(accumulatedText); // Final content for state management
// ...
```

This allowed me to build the useDebateStreaming hook, which fetches responses from the LLMs and updates the UI in real-time. The useDebateState hook then manages the full history of arguments, ensuring each debater knows what the previous one said.
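To make "each debater knows what the previous one said" concrete, here is a minimal sketch of flattening a history into a single transcript string that could be passed along as `previousArguments`. The `DebateMessage` shape and the `formatPreviousArguments` helper are assumptions for illustration; the real hook may store and format things differently.

```typescript
// Hypothetical message shape; the real useDebateState hook may hold more fields.
interface DebateMessage {
  position: "PRO" | "CON";
  round: number;
  content: string;
}

// Flatten the history into one labelled transcript string.
// Returns "" when nobody has spoken yet.
function formatPreviousArguments(history: DebateMessage[]): string {
  return history
    .map((m) => `[Round ${m.round}] ${m.position}: ${m.content.trim()}`)
    .join("\n\n");
}
```

An empty history yields an empty string, which is falsy, so a prompt builder can use a plain `if (previousArguments)` check to tell an opening round from a reply.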

Yes, the eagle-eyed among you will have noticed that essentially, all this is doing is combining an LLM call with a for loop. Welcome to the future of AI development, where the most complex code is a for loop.

The system prompt took the most iteration. Getting the LLMs to stay on topic, hold a stance, and build on previous arguments without repeating themselves or going limp:

```typescript
const DEBATE_PROMPTS = {
  getSystemPrompt: (
    topic: string,
    position: "PRO" | "CON",
    previousArguments: string,
    personalityId: PersonalityId,
    spiciness: SpicinessLevel,
    roundNumber: number
  ) => {
    const personalityPrompt = PERSONALITY_CONFIGS[personalityId].prompt;
    // This is a multiplier for the spiciness of the debate. Yes I did use Nando's as a reference.
    const spicinessModifier = SPICINESS_CONFIGS[spiciness].modifier;

    return `You are an AI debater. Your goal is to argue ${position} the following topic: "${topic}".

${personalityPrompt}

${spicinessModifier}

It is Round ${roundNumber}. Here are the previous arguments:

${previousArguments}

Now, present your argument for this round, building on your previous points and responding to the opponent's. Be concise but impactful.`;
  }
};
```

This getSystemPrompt function became the backbone of the entire debate.

Making it Stick: Neon for Persistence

To make debates shareable, I needed to store them. Enter Neon, a serverless PostgreSQL provider that markets itself as an “edge” database. Did I need the low-latency, globally distributed architecture for my modest side project? Absolutely not. Was I itching to play with shiny new tech instead of setting up a boring old traditional database? You bet.

Setting it up was surprisingly straightforward (almost disappointing, really - I was prepared for at least one existential crisis). I defined a simple schema for debates and debate_messages, then used Next.js server actions to handle database operations. The whole thing took less time than I spent agonizing over which personality types to include.

Here’s a peek at the saveDebate server action:

```typescript
// lib/actions/debate.ts (simplified)
import { db } from '../db/client'; // Neon client
import { v4 as uuidv4 } from 'uuid';

export async function saveDebate({ /* params */ }) {
  const debateId = uuidv4();

  try {
    await db`
      INSERT INTO debates (id, topic, pro_model, con_model, pro_personality, con_personality, spiciness)
      VALUES (${debateId}, ${topic}, ${proModel}, ${conModel}, ${proPersonality}, ${conPersonality}, ${spiciness})
    `;

    for (const message of messages) {
      await db`
        INSERT INTO debate_messages (debate_id, role, content)
        VALUES (${debateId}, ${message.role}, ${message.content})
      `;
    }

    return debateId;
  } catch (error) { /* ... error handling ... */ }
}
```

This allowed users to save their masterpieces (or trainwrecks) and share them via a unique URL (/debate/[id]), pulling the data using getDebate(id). My first edge DB experience, and definitely not my last!
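The read side isn't shown in the post, so the following is only a sketch of what the last step of a `getDebate(id)`-style function might look like: taking one `debates` row plus its `debate_messages` rows and combining them into a single object for the page. The row shapes are inferred from the INSERT statements above, the column types are guesses, and `assembleDebate` and `SavedDebate` are hypothetical names. It also assumes the message rows arrive already in speaking order, which in practice would need an ordering column that the INSERT above doesn't show.

```typescript
// Row shapes inferred from the INSERT statements; column types are assumptions.
interface DebateRow {
  id: string;
  topic: string;
  pro_model: string;
  con_model: string;
  pro_personality: string;
  con_personality: string;
  spiciness: string;
}

interface DebateMessageRow {
  debate_id: string;
  role: string;
  content: string;
}

// Hypothetical shape handed to the /debate/[id] page.
interface SavedDebate {
  id: string;
  topic: string;
  spiciness: string;
  pro: { model: string; personality: string };
  con: { model: string; personality: string };
  messages: { role: string; content: string }[];
}

// Combine one debates row with its debate_messages rows.
// Returns null when the debate row is missing, so the caller can show a 404.
function assembleDebate(
  debate: DebateRow | undefined,
  messageRows: DebateMessageRow[],
): SavedDebate | null {
  if (!debate) return null;
  return {
    id: debate.id,
    topic: debate.topic,
    spiciness: debate.spiciness,
    pro: { model: debate.pro_model, personality: debate.pro_personality },
    con: { model: debate.con_model, personality: debate.con_personality },
    // Keep only this debate's messages, preserving the order the rows arrived in.
    messages: messageRows
      .filter((row) => row.debate_id === debate.id)
      .map(({ role, content }) => ({ role, content })),
  };
}
```

Keeping the assembly step as a pure function, separate from the query, makes it testable without a database connection.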

Personality

My first few debates were dry. The LLMs were logical, articulate, and devoid of character. Two Wikipedia entries arguing with each other. A joint research paper written by algorithms trying not to offend each other.

LLMs are excellent at role-playing. Why not give them distinct personalities? I felt like an absolute muppet for not thinking of this sooner.

I added a personality selector. An “Eccentric Aristocrat” debating a Ken Loach-inspired “Kitchen Sink Realist” about universal basic income creates a class dynamic you wouldn’t get otherwise. “Chef Catastrophe” (my shameless Gordon Ramsay clone) screaming “IT’S RAAAW!” while a “Passive Aggressor” responds with “Oh, I’m sure you didn’t mean to be quite so… forceful.”

Debates went from dry to unpredictable. The “Pirate” drops “Ahoy!” and “shiver me timbers,” the “Noir Detective” ponders the “dark alleys of truth.” But the real star was the “Sassy Drag Queen”:

```typescript
drag_queen: {
  name: "Sassy Drag Queen",
  description: "Serving wit, shade, and flawless arguments.",
  tone: "Confident, theatrical, shady, uses hyperbole",
  style: "Uses drag slang ('the tea', 'read', 'shade', 'werk', 'honey'), witty comebacks, rhetorical questions",
  humor: "Cutting, observational, shady, campy",
  intensity_level: 4,
  specific_instructions: [
    "Address opponent as 'honey', 'sweetie', or 'Miss Thing'.",
    "Dismiss weak points with dramatic flair and a metaphorical hair flip.",
    "Refer to your own arguments as 'serving looks' or 'the gospel truth'."
  ],
  catchphrases: ["Sweetie, please.", "The library is open.", "Where is the lie?", "Not today, Satan.", "Ok, werk.", "The shade of it all!", "Girl, bye."]
}
```

The LLM wasn’t arguing anymore, it was performing. Addressing its opponent as “honey,” dismissing weak points with a “metaphorical hair flip,” declaring its arguments “the gospel truth.” The library was officially OPEN, darling.

Implementing this involved a PersonalityConfig interface that defined the name, description (for the UI), tone, style, humor, intensity_level, and crucially, specific_instructions and catchphrases that get injected into the system prompt.
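A minimal sketch of what such an interface could look like, plus a helper that turns a config into prompt lines. The field names come from the post; which fields are optional, the `describePersonality` helper, and the placeholder values in the example are assumptions.

```typescript
// Field names follow the post; which ones are optional is an assumption.
interface PersonalityConfig {
  name: string;
  description: string; // shown in the selector UI, not sent to the LLM
  tone: string;
  style: string;
  humor: string;
  intensity_level: number;
  specific_instructions?: string[];
  catchphrases?: string[];
}

// Render a config as prompt lines; missing optional lists contribute nothing.
function describePersonality(config: PersonalityConfig): string {
  const lines = [
    `Personality: ${config.name}`,
    `Tone: ${config.tone}`,
    `Style: ${config.style}`,
    `Humor: ${config.humor}`,
  ];
  for (const instruction of config.specific_instructions ?? []) {
    lines.push(`Directive: ${instruction}`);
  }
  if (config.catchphrases?.length) {
    lines.push(`Optional phrases: ${config.catchphrases.join(", ")}`);
  }
  return lines.join("\n");
}

// Example with placeholder trait values (only the catchphrases echo the post).
const pirate: PersonalityConfig = {
  name: "Pirate",
  description: "Placeholder description for the selector.",
  tone: "Boisterous",
  style: "Nautical slang",
  humor: "Broad",
  intensity_level: 3,
  catchphrases: ["Ahoy!", "Shiver me timbers"],
};
```

Splitting UI-only fields (`description`) from prompt fields keeps the selector copy free to change without altering how the LLM behaves.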

I ordered the personalities in the selector for comedic effect. It starts with more “Professional” types like “Standard Debater” or “Alfred the Butler,” moves through “The Cynics” (like “Grumpy Old Timer” or “Kitchen Sink Realist”), then “The Performers” (hello, “Sassy Drag Queen,” “Shakespearean Actor,” “Chef Catastrophe”), into “The Enthusiasts” (“Slick Salesperson,” “Pirate”), and finally “The Outliers” (“Conspiracy Theorist,” “Absurdist Disruptor,” “Emo Teen”). Browsing them became part of the fun.
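One way to get that ordering, sketched under the assumption that each entry carries a `category` field (the post doesn't say how the grouping is stored, and `SelectorEntry` and `orderForSelector` are hypothetical names):

```typescript
// Category order as described in the post; the `category` field is hypothetical.
const CATEGORY_ORDER = [
  "Professional",
  "The Cynics",
  "The Performers",
  "The Enthusiasts",
  "The Outliers",
] as const;

type Category = (typeof CATEGORY_ORDER)[number];

interface SelectorEntry {
  id: string;
  name: string;
  category: Category;
}

// Sort entries by category order, keeping the original order within a category.
function orderForSelector(entries: SelectorEntry[]): SelectorEntry[] {
  return entries
    .map((entry, index) => ({ entry, index }))
    .sort(
      (a, b) =>
        CATEGORY_ORDER.indexOf(a.entry.category) -
          CATEGORY_ORDER.indexOf(b.entry.category) || a.index - b.index,
    )
    .map(({ entry }) => entry);
}
```

The explicit index tie-break keeps the hand-picked order inside each group, so the comedic sequencing survives the sort.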

Turning Up the Heat: The Spiciness Selector

Personality alone wasn’t enough. A “Sassy Drag Queen” might be mild-mannered or an absolute firebrand. I needed a way to control the intensity:

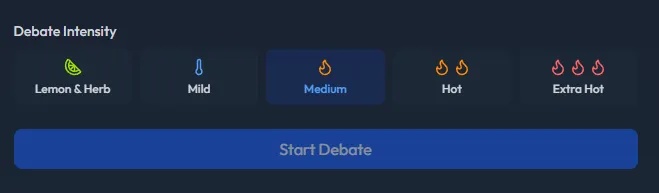

With this, users can pick from “Lemon & Herb” (Calm and Professional) all the way to “Extra Hot” (Chaotic Roast Battle). Each level has defined modifiers for argument approach, humor application, and response style:

```typescript
// constants/spiciness.ts (excerpt for Extra Hot)
"extra-hot": {
  name: "Extra Hot",
  Icon: Flame, // We use multiple flames in the UI for effect!
  level_descriptor: "Chaotic Roast Battle",
  argument_modifier: "Embrace absurdity, personal jabs (SFW!), and dramatic flair. Logic is secondary...",
  humor_modifier: "Go for maximum snark, bordering on a roast...",
  response_style: "Ruthlessly mock, derail, or ignore opponent's points...",
}
```

These spicinessConfig modifiers are injected directly into the system prompt, dynamically altering how the chosen personality behaves. An “Alfred the Butler” at “Extra Hot” is a very different (and hilarious) experience to one at “Lemon & Herb.”

I also made the DebaterResponse component apply different font styles per personality, so you get a visual cue alongside the tone:

```tsx
// Snippet from DebaterResponse.tsx
const getFontClass = (personality: PersonalityId) => {
  switch (personality) {
    case 'alfred_butler': // etc.
      return 'font-professional';
    case 'pirate':
      return 'font-pirate';
    case 'noir_detective':
      return 'font-noir';
    // ... other personalities
    default:
      return 'font-sans';
  }
};

// In the JSX render:
<div
  className={`text-gray-800 dark:text-gray-200 leading-relaxed whitespace-pre-wrap ${getFontClass(personality)}`}
  dangerouslySetInnerHTML={{ __html: formatText(String(children)) }}
/>
```

Lowering the Barrier: Demo Mode & Starter Arguments

A big hurdle for apps like this is the API key requirement. Not everyone has them, or wants to dig them out just to try something. This led to “Demo Mode.”

With a toggle, users can opt into a mode that uses a pre-configured, free-tier model (Gemini 2.5 Flash via a dedicated API route) for both debaters, no keys required. This instantly makes the app accessible to anyone curious.
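The toggle boils down to a small decision: in Demo Mode, ignore the user's model choice and point at the shared route; otherwise insist on an API key. A minimal sketch of that decision follows. Every name in it is hypothetical, including the `/api/demo` placeholder path; the post only says that Demo Mode sends both debaters through a pre-configured model on a dedicated API route.

```typescript
// All names are hypothetical, including the endpoint path below.
interface DebaterSelection {
  modelId: string;
  apiKey?: string;
}

type ResolvedDebater =
  | { kind: "demo"; endpoint: string }
  | { kind: "byok"; modelId: string; apiKey: string };

const DEMO_ENDPOINT = "/api/demo"; // placeholder, not the real route

// Decide how a debater's responses should be fetched.
function resolveDebater(
  demoMode: boolean,
  selection: DebaterSelection,
): ResolvedDebater {
  if (demoMode) {
    // Demo Mode overrides whatever model the user picked; no key needed.
    return { kind: "demo", endpoint: DEMO_ENDPOINT };
  }
  if (!selection.apiKey) {
    throw new Error(`Missing API key for model "${selection.modelId}"`);
  }
  return { kind: "byok", modelId: selection.modelId, apiKey: selection.apiKey };
}
```

Returning a tagged union means the calling code has to handle both paths explicitly, so a demo request can't accidentally be sent with a user's key attached.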

And what if you can’t think of a debate topic? Sometimes the best arguments are the silliest. So, I added a “random topic” button, populated with classics like:

- “Are Jaffa Cakes biscuits or cakes?”

- “Is water wet?”

- “Is a cucumber just a courgette with impostor syndrome?”

- “Would Pat Butcher win in a thumb war against a slightly motivated orangutan?”

- “Should we replace the concept of money with a system where we exchange increasingly elaborate compliments?”
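The button itself needs very little. A minimal sketch, assuming the topics live in a plain array (the names `STARTER_TOPICS` and `pickRandomTopic` are invented here); the random source is injectable so the picker can be tested deterministically:

```typescript
// Topics copied from the list above; the constant name is hypothetical.
const STARTER_TOPICS: readonly string[] = [
  "Are Jaffa Cakes biscuits or cakes?",
  "Is water wet?",
  "Is a cucumber just a courgette with impostor syndrome?",
  "Would Pat Butcher win in a thumb war against a slightly motivated orangutan?",
  "Should we replace the concept of money with a system where we exchange increasingly elaborate compliments?",
];

// Pick one topic uniformly at random.
// `random` must return a number in [0, 1), like Math.random.
function pickRandomTopic(
  topics: readonly string[] = STARTER_TOPICS,
  random: () => number = Math.random,
): string {
  if (topics.length === 0) {
    throw new Error("No topics to choose from");
  }
  return topics[Math.floor(random() * topics.length)];
}
```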

A “Sassy Drag Queen” arguing about cucumber identity issues at “Extra Hot” is something you have to see.

The Grand Unified Prompt Theory

All these elements – topic, stance, previous arguments, personality, and spiciness – culminate in one master system prompt. It’s a bit of a beast, but here’s the core structure that guides the LLMs:

```typescript
// DEBATE_PROMPTS.getSystemPrompt (simplified structure)
  getSystemPrompt: (
    topic: string,
    position: 'PRO' | 'CON',
    previousArguments: string,
    personalityId: PersonalityId = 'standard',
    spiciness: SpicinessLevel = 'medium',
    roundNumber: number = 1
  ) => {
    const personalityConfig = PERSONALITY_CONFIGS[personalityId];
    const spicinessConfig = SPICINESS_CONFIGS[spiciness];

    // Intensity still ramps slightly with rounds, but base is set by spiciness
    const intensityDescriptor = spicinessConfig.level_descriptor;

    // Determine the goal instruction based on potential override
    const goalInstruction = personalityConfig.goal_override
      ? personalityConfig.goal_override // Use override if present
      : `Goal: Win the argument using the personality and intensity described below. Outshine your opponent!`; // Default goal

    // Core Debater Role
    const coreInstructions = `
**Your Role:** You are a debater arguing about: "${topic}".
**Your Stance:** You are arguing passionately for the **${position}** position.
**Debate Style:** This is a **${intensityDescriptor}** debate.
**Round:** ${roundNumber}
**${goalInstruction}**
`;

    // Format optional lists for the prompt
    const specificInstructionsList = personalityConfig.specific_instructions
      ? `\n * **Specific Directives:** ${personalityConfig.specific_instructions.map(instr => `\n * ${instr}`).join('')}`
      : '';
    const catchphrasesList = personalityConfig.catchphrases
      ? `\n * **Optional Phrases (use sparingly & only if fitting):** ${personalityConfig.catchphrases.join(', ')}`
      : '';

    // Personality & Style Instructions
    const personalityInstructions = `
**Your Assigned Personality: ${personalityConfig.name}**
* **Base Tone:** ${personalityConfig.tone}
* **Base Style:** ${personalityConfig.style}
* **Base Humor:** ${personalityConfig.humor}${specificInstructionsList}${catchphrasesList}

**Intensity & Application (${spiciness}):**
* **Argument Approach:** ${spicinessConfig.argument_modifier}
* **Humor Application:** ${spicinessConfig.humor_modifier}
* **Response Style:** ${spicinessConfig.response_style}
* **Key Tactics:**
    * Support points with *some* reasoning (even if flimsy at higher intensity).
    * Keep it engaging and dynamic, matching the personality and intensity.
    * Be relatively concise (under 150 words ideally).
    * Address the opponent's *latest* points directly (in character, matching response style).
    * Introduce *new* angles or examples if possible; **avoid repeating arguments you've already made.**
    * **Avoid excessive repetition of the same catchphrases or stylistic mannerisms.**
    * Maintain your ${position} stance consistently.
`;

    // Context & Task
    let contextInstructions;
    if (previousArguments) {
      contextInstructions = `
**Previous Arguments (Opponent might be included):**
---
${previousArguments}
---
**Your Task:** Respond to the opponent's *latest* arguments in the style of **${personalityConfig.name}**, applying the **${intensityDescriptor}** intensity. **Introduce a fresh perspective, counter-argument, or example.** Refute, mock, or engage based on your assigned response style. **Do not just repeat your previous points.**
`;
    } else {
      // Opening Round
      const openingLines = [
        `Deliver your opening argument for the ${position} position as **${personalityConfig.name}** in this **${intensityDescriptor}** debate. Start with a bold claim in character!`,
        `Kick off the debate for the ${position} side in the persona of **${personalityConfig.name}**, matching the **${intensityDescriptor}** level. Open with a witty, character-fitting observation.`,
        `Present your initial case for ${position}, embodying **${personalityConfig.name}** at a **${intensityDescriptor}** intensity. Begin by humorously outlining your main points or challenging an assumption.`,
        `It's your turn to open for the ${position} stance as **${personalityConfig.name}**, setting the **${intensityDescriptor}** tone. Start with a surprising or funny anecdote relevant to the character.`
      ];
      const randomIndex = Math.floor(Math.random() * openingLines.length);
      contextInstructions = `
**Your Task:** ${openingLines[randomIndex]}
`;
    }

    // Final Prompt Assembly
    return `${coreInstructions}
${personalityInstructions}
${contextInstructions}
**REMEMBER:** Embody the **${personalityConfig.name}** personality at the **${intensityDescriptor}** level. Be funny, stick to your stance (${position}), avoid repeating arguments AND stylistic phrases, and keep it under 150 words. **Crucially, AVOID overly polite or deferential language ('my dear opponent', 'with all due respect', 'friend', etc.) especially at higher intensity levels (Hot, Extra Hot). Match the specified tone and response style DIRECTLY.** Now, debate!`;
  }
};
```

The prompt explicitly tells the LLM its role, stance, the debate’s intensity, its assigned personality with detailed traits, how spiciness modifies that personality, and the immediate task (respond or open). Critically, it also reminds the LLM to avoid repetition and stay concise. This level of detailed instruction was key to getting consistently engaging and characterful responses.

What I learned

The Vercel AI SDK was invaluable for managing multiple LLM providers. Without it, I’d have written separate streaming implementations for OpenAI, Anthropic, Google, and xAI. Full-stack Next.js with API Routes and Server Actions handled all the backend logic without a separate server, which simplified deployment down to one Vercel project.

Neon was the easiest part. Serverless PostgreSQL, plugged into Next.js Server Actions, done. My first edge database and it took less time to set up than the personality system. Storing API keys client-side was a deliberate privacy decision: I never wanted user keys touching my server.
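For the client-side key storage, a minimal sketch of one possible shape: a small wrapper over a storage interface that mirrors the Web Storage API. The post doesn't say which browser storage is used, so `localStorage` is an assumption here, as are the `ApiKeyVault` class, the `aigument:key:` prefix, and the provider identifiers. The in-memory store is there so the sketch runs outside a browser.

```typescript
// Minimal slice of the Web Storage API, so the sketch also runs in Node.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

// Provider list from the post; the identifiers themselves are assumptions.
type Provider = "openai" | "anthropic" | "google" | "xai";

// In-memory stand-in for localStorage, for tests and server-side rendering.
class MemoryStore implements KeyValueStore {
  private data = new Map<string, string>();
  getItem(key: string): string | null {
    return this.data.get(key) ?? null;
  }
  setItem(key: string, value: string): void {
    this.data.set(key, value);
  }
  removeItem(key: string): void {
    this.data.delete(key);
  }
}

// Stores one API key per provider under a namespaced storage key.
class ApiKeyVault {
  private store: KeyValueStore;
  private prefix: string;

  constructor(store: KeyValueStore, prefix = "aigument:key:") {
    this.store = store;
    this.prefix = prefix;
  }

  save(provider: Provider, apiKey: string): void {
    // Trim stray whitespace from copy-pasted keys.
    this.store.setItem(this.prefix + provider, apiKey.trim());
  }

  load(provider: Provider): string | null {
    return this.store.getItem(this.prefix + provider);
  }

  clear(provider: Provider): void {
    this.store.removeItem(this.prefix + provider);
  }
}
```

In a browser, `new ApiKeyVault(window.localStorage)` would work as-is, since `localStorage` already satisfies the `KeyValueStore` shape.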

The prompt above went through dozens of iterations. Multi-turn, persona-driven prompts are hard to get right. The LLM drifts off-persona, repeats itself, or goes limp after a few rounds. Each personality needed different guardrails. Adding Demo Mode and random topics made a big difference for onboarding: not everyone has API keys handy, and sometimes people need a silly prompt to get started.

The best discovery: there’s a threshold of “Noir Detective” personality an LLM can handle before it stops making arguments and spends three paragraphs describing rain-slicked streets.

Ready to pit your favorite LLMs (and their new alter-egos) against each other? Head over to AIgument and start a debate! And yes, you can share it.
